If your web application cannot respond quickly enough, it is probably condemned to failure in the competitive market for online apps. With the rise of big data and streaming data, organizations must quickly understand data changes and make decisions in real time, which can mean the difference between winning and losing a significant sale to a rival. Regular performance testing during the development cycle helps ensure that the final product is as responsive, scalable, and reliable as possible all year round. Additionally, correct sizing helps ensure that customers have a solution that consistently meets their needs.

Performance testing – what is it?

The term “performance testing” covers a wide range of testing. The field is frequently oversimplified to a series of tests that measure how long user actions (transactions) take for a single user or under extremely modest loads. But that kind of testing covers just a small portion of performance testing.

Another common misunderstanding is that performance testing should only be scheduled late in the development cycle. As a result, little consideration or preparation is given to performance at the outset of a project. When performance testing is planned late, the product frequently shows poor scaling characteristics, gating defects discovered late, and unsatisfactory performance. Late in the development cycle, performance issues can be exceedingly difficult to fix and frequently call for a change in technology or architecture.

All performance-related factors must be considered during the planning phase of the overall development release cycle. In addition to what the program will do, we should think about how we want the application to grow and perform and what level of robustness is necessary. Do we want to be able to deploy the application on multiple nodes so that we can add nodes when we need additional throughput? How quickly do we need results? Are there any overarching criteria or service level agreements (SLAs) that must be met? Are there uptime expectations for the application? To make sure that the technologies and designs selected will meet those expectations, all these elements should be taken into account as early in the design and architecture of the application as possible.

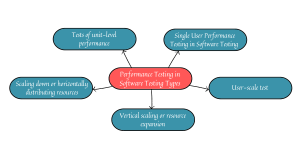

Types of Performance Testing in Software Testing

Unit-level performance tests

A unit-level performance test measures how long a small piece of code takes to execute at the component or even code level. Developers frequently use this kind of testing to check updates to their code quickly and make sure they introduce no performance degradation.

These tests have several strengths:

- Quick execution time.

- Giving the developer feedback almost instantly.

- Usually, the test environment needs the bare minimum of components.

- Suits a continuous code delivery system nicely.

- Run regularly.

- Relatively simple to build and keep up.

Developers frequently write and maintain these kinds of tests in the same language as the source code. Since the tests can be written rather quickly, one can produce a large number of them to achieve broad code coverage.
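As a rough illustration of a unit-level performance test, the sketch below times a small function with Python's standard `timeit` module and compares the result with a time budget. The function `build_index`, the helper names, and the 5 ms budget are all hypothetical and stand in for whatever unit and threshold a team actually agrees on.

```python
import timeit


def build_index(words):
    """Hypothetical unit under test: map each word to its positions."""
    index = {}
    for pos, word in enumerate(words):
        index.setdefault(word, []).append(pos)
    return index


def best_time_per_call(func, *args, repeat=5, number=200):
    """Return the best observed seconds per call over several timing runs."""
    timer = timeit.Timer(lambda: func(*args))
    runs = timer.repeat(repeat=repeat, number=number)
    # The minimum is the run least disturbed by other activity on the machine.
    return min(runs) / number


def check_budget(seconds_per_call, budget_seconds):
    """True when the measured time stays within the agreed budget."""
    return seconds_per_call <= budget_seconds


if __name__ == "__main__":
    sample = ["alpha", "beta", "gamma", "beta"] * 250  # 1,000 words
    elapsed = best_time_per_call(build_index, sample)
    # The 5 ms budget is an assumed threshold, not a recommendation.
    within = check_budget(elapsed, 0.005)
    print(f"{elapsed * 1e6:.1f} us per call, within budget: {within}")
```

A check like this can run alongside ordinary unit tests. Absolute timings vary between machines, so a budget is usually set with some headroom or compared against a baseline recorded on the same hardware.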

Single User Performance Testing in Software Testing

Single-user or light-load testing is one of the more popular kinds of performance test. To produce statistically meaningful results, this method runs a set of user activities over several iterations.

The most commonly monitored key performance indicators are the mean, median, 90th percentile, and maximum response times. The results are compared against either a baseline set of values or a specified set of values regarded as acceptable. Single-user tests are typically quick and easy to run, and they are great for identifying software bottlenecks or sluggish code execution that is not caused by a lack of system resources. Because this form of testing does not overtax the system, resources are not the limiting factor. However, resource metrics and the various application and system logs should still be watched for confirmation. These tests may be automated using test tools developed in-house, free performance test tools (e.g. JMeter), or licensed performance test tools (e.g. LoadRunner).

Each type of test tool has advantages and disadvantages:

- Tools built from scratch in-house often fit the application best, but both the tests and the test tool must be maintained regularly.

- Free tools cost nothing to license, but they may lack some features or capabilities, in which case they need to be extended or their limitations understood.

- Licensed tools typically cost more money but provide far better support for the product and its use.

The best test tool strategy should be chosen after doing a thorough cost/benefit analysis.
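Whichever tool produces the measurements, the single-user KPIs named above (mean, median, 90th percentile, maximum) can be computed and compared with a baseline in a few lines. The following is a minimal sketch using Python's standard `statistics` module; the function names, the sample response times, the baseline values, and the 10% tolerance are all assumptions made for illustration.

```python
import statistics


def summarize(samples_ms):
    """Compute mean, median, 90th percentile and maximum of response times."""
    # quantiles(n=10) returns 9 cut points; the last one is the 90th percentile.
    p90 = statistics.quantiles(samples_ms, n=10, method="inclusive")[-1]
    return {
        "mean": statistics.mean(samples_ms),
        "median": statistics.median(samples_ms),
        "p90": p90,
        "max": max(samples_ms),
    }


def regressions(current, baseline, tolerance=0.10):
    """Return the KPIs that exceed the baseline by more than the tolerance."""
    return [
        name
        for name, value in current.items()
        if value > baseline[name] * (1 + tolerance)
    ]


if __name__ == "__main__":
    # Hypothetical response times (ms) from repeated runs of one transaction.
    samples = [100, 110, 120, 130, 140, 150, 160, 170, 180, 190, 200]
    baseline = {"mean": 150, "median": 150, "p90": 160, "max": 200}
    kpis = summarize(samples)
    print(kpis)
    print("regressed KPIs:", regressions(kpis, baseline))
```

Comparing against a tolerance rather than the exact baseline values leaves room for normal run-to-run noise.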

User-scale test

Understanding how your application behaves as more users are added to a system with fixed resources is crucial. A good example is sizing an environment for a proposed number of end users: a user-scale test shows how performance changes as more users are introduced to the system. A linear profile is a desirable outcome because it makes estimates by interpolation and extrapolation straightforward.
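To show why a linear profile helps with estimation, the sketch below fits a straight line to measured response times at several user counts, checks how well the line fits, and extrapolates to a larger user count. The data points are invented for illustration, and the helper names are hypothetical; a real test would substitute its own measurements.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def r_squared(xs, ys, slope, intercept):
    """Share of the variance explained by the line; 1.0 is a perfect fit."""
    mean_y = sum(ys) / len(ys)
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1 - ss_res / ss_tot


def predict(users, slope, intercept):
    """Estimate the response time at a given user count from the fitted line."""
    return slope * users + intercept


if __name__ == "__main__":
    # Hypothetical measurements: concurrent users vs. mean response time (ms).
    users = [10, 20, 30, 40]
    response_ms = [120, 140, 160, 180]
    slope, intercept = fit_line(users, response_ms)
    print("fit quality (R^2):", r_squared(users, response_ms, slope, intercept))
    print("estimated ms at 60 users:", predict(60, slope, intercept))
```

A fit quality well below 1.0 suggests the profile is not linear, in which case extrapolating beyond the tested range is unreliable.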

A series of individual tests can be run, increasing the number of users in proportion for each test: for example, 0.5 users per core, one user per core, two users per core, and so on.

Another approach is to start a number of users, wait for a set length of time or until the system reaches a steady state, and then gradually ramp up the number of users. This is commonly called staged or step-up performance testing.
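The two approaches above can be combined into a simple load plan. The sketch below turns users-per-core ratios into user counts and lays them out as the stages of a step-up test. The function names, the 8-core machine, and the 300-second hold time are assumptions for illustration; a load tool would take such a plan as input in its own configuration format.

```python
def per_core_levels(cores, users_per_core=(0.5, 1, 2)):
    """Translate users-per-core ratios into whole user counts (at least 1)."""
    return [max(1, round(cores * ratio)) for ratio in users_per_core]


def step_schedule(levels, hold_seconds):
    """Return (start_second, target_users) for each stage of a step-up test."""
    return [(i * hold_seconds, users) for i, users in enumerate(levels)]


def users_at(schedule, second):
    """Return the target user count active at a given elapsed second."""
    active = schedule[0][1]
    for start, users in schedule:
        if second >= start:
            active = users
    return active


if __name__ == "__main__":
    levels = per_core_levels(cores=8)              # assumed 8-core server
    plan = step_schedule(levels, hold_seconds=300)  # assumed 5-minute stages
    for start, users in plan:
        print(f"t={start:>4}s -> {users} users")
    print("users at t=450s:", users_at(plan, 450))
```

Holding each stage long enough to reach a steady state keeps the measurements for one user level from being mixed with the ramp to the next.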

This kind of testing can quickly identify slow code execution under lighter loads. As the number of users increases, resource limitations (e.g. locks, threads, memory) may become apparent. This lets developers assess whether their application makes full use of the limited resources. By subjecting an application to excessive loads, this kind of testing can also determine the upper limits of the application, what happens at those limits, and where governors may be needed.

To better understand the scaling characteristics of the application and system, system metrics, application logs, and transaction response metrics should be closely monitored and correlated while running user scale tests.

LoadRunner, NeoLoad, JMeter, Rational Performance Tester, and k6 are a few examples of the tools that can be used.

Vertical scaling or resource expansion

A business application should be able to scale vertically, which means that when server resources are added, the performance of the program increases roughly proportionately.

As an illustration, doubling the number of CPUs and the amount of RAM at the same user load should improve user transaction performance, perhaps by as much as a factor of two, while doubling both the resources and the number of interactive users should leave performance roughly unchanged. Running a series of tests with various user loads before and after adding the resources is an easy way to test this.
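One way to express the result of such a before-and-after comparison is as a speedup and a scaling efficiency, where an efficiency of 1.0 means performance improved in proportion to the added resources. The sketch below is a minimal illustration; the function names and the response times are hypothetical, and it assumes the user load was the same in both runs.

```python
def speedup(before_ms, after_ms):
    """How many times faster a transaction became after adding resources."""
    return before_ms / after_ms


def scaling_efficiency(before_ms, after_ms, resource_factor):
    """Speedup divided by the resource increase; 1.0 is proportional scaling."""
    return speedup(before_ms, after_ms) / resource_factor


if __name__ == "__main__":
    # Hypothetical mean response times at the same user load.
    before_ms = 400.0  # original server
    after_ms = 250.0   # after doubling CPUs and RAM (resource_factor = 2)
    print("speedup:", speedup(before_ms, after_ms))
    print("efficiency:", scaling_efficiency(before_ms, after_ms, 2))
```

Repeating the calculation at each tested user load shows whether the added resources help across the whole range or only under heavier loads.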

Scaling out or horizontally distributing resources

Many applications allow individual components or the entire application to be distributed over multiple servers. The ability to distribute an application lets businesses grow the application, its customer base, and its data using smaller, less expensive hardware. To make sure an application actually benefits from additional hardware, one can plan and carry out a scaling test that measures how much adding servers affects performance or capacity.